ChatGPT is just the beginning...

...and Fritz Lang saw it coming.

I have a talent for stressing myself out. Mild pain in my back or discomfort in my abdomen? Gotta be cancer. Course evaluations are up? I bet this is the semester that students finally see through my schtick. Time to smuggle a contraband pug onto a Trailways bus? No matter how many times I play “Black Betty” on my earbuds, my palms are still sweating as I hand the driver my ticket. I’d suggest, then, that you take my anxieties with a grain of salt, except the mention of salt makes me worry about my blood pressure, which, in one of biology’s cruel ironies, actually raises my blood pressure.

Sometimes, though, that oversensitive threat detector in my head picks up on something real. There were probably smarter precautions to take circa Valentine’s Day 2020 than cramming my kitchen cabinets full of ramen, but the general notion that American society wasn’t freaked out enough by what was coming proved correct. (Even a broken amygdala is right twice a day, amirite??) In his column for the New York Times last week, Ezra Klein recalled that uneasy interval just before the Covid-19 wave crashed onto our shores:

> There were weeks when it was clear that lockdowns were coming, that the world was tilting into crisis, and yet normalcy reigned, and you sounded like a loon telling your family to stock up on toilet paper. There was the difficulty of living in exponential time, the impossible task of speeding policy and social change to match the rate of viral replication. I suspect that some of the political and social damage we still carry from the pandemic reflects that impossible acceleration. There is a natural pace to human deliberation. A lot breaks when we are denied the luxury of time.
>
> But that is the kind of moment I believe we are in now.

As you no doubt gathered from the title of this post, Klein is talking here about the specter of a world remade by advances in artificial intelligence. Of course, a lot of people are talking about AI right now. In my own profession—uh, professing—everyone is scrambling to figure out how and whether research papers will remain a useful gauge of student performance in a world where computers can bang out a draft in a matter of seconds. You’ve likely heard about the Bing chatbot’s unsettling attempt to seduce tech journalist Kevin Roose. Less than four months after ChatGPT was unveiled to the public, OpenAI released a new, souped-up iteration just last week.

Despite artificial intelligence’s ubiquity in The Discourse, I agree with Klein that we’re not adequately grappling with the major changes that are coming down the pike. You only need to look at right-wing screeching about “woke AI” to see that normalcy reigns, stupid as our normal may be. While Ben Shapiro and Elon Musk are preoccupied with their inability to bait ChatGPT into using the n-word, these rapidly advancing technologies have the potential to upend whole professions in the next few years. Copywriters. Graphic designers. Paralegals and junior attorneys. Accountants. Translators. And, in a small gift of schadenfreude to any displaced worker who’s ever been snidely advised to “learn to code,” computer coders. AI doesn’t need to take over these jobs completely to leave millions unemployed. Instead of a copywriter taking, say, a couple hours to write a press release, they’ll soon spend just a few minutes editing the AI’s draft. Exponentially faster turnaround means you need many fewer people to do the same amount of work. Automation didn’t remove every worker from the Ford factory, but it kicked a lot of them off the assembly line.

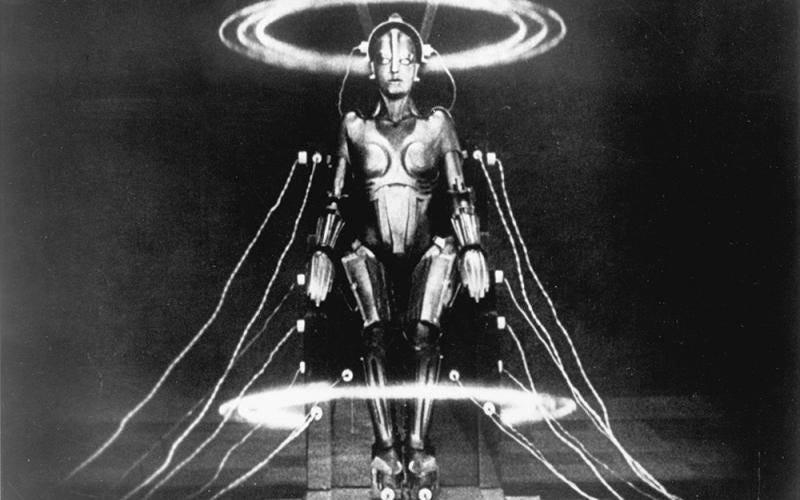

As a good Weimar film freak, I’ve been thinking about these developments in relation to Fritz Lang’s sci-fi landmark Metropolis. Especially since upwards of 75% of silent movies are lost to time, I tend to avoid confident declarations of “firsts” in film history, but you often see Metropolis touted as the earliest portrayal of artificial intelligence in world cinema. In it, Rotwang—the wild-haired archetype of a mad scientist—builds a robot that can convincingly mimic the appearance of real people. Under orders from Joh Fredersen, Metropolis’ technocrat dictator, Rotwang transforms his creation into a dead ringer for Maria, the young heroine of the city’s long-suffering working class. Joh hopes that by controlling this false Maria (sometimes referred to as the Maschinenmensch or “machine person”), he can disgrace her and thereby crush the workers’ hopes for a better life. Rotwang, however, has other plans. Nursing an old grudge against Joh, he instructs the android impostor to whip the rabble into a nihilistic frenzy that will destroy Metropolis once and for all. He almost succeeds, but ultimately the false Maria is destroyed, Joh’s son Freder knocks Rotwang off a very high rooftop, and harmony is restored between labor and management.

What’s striking to me about this century-old film’s orientation toward AI is how similar it is to our own. Then as now, we can’t help but anthropomorphize. Even in its mechanized form, the Maschinenmensch is crafted in our image—two arms, two legs, a face. Once it takes on Maria’s appearance, even her most fervent followers are convinced that they’re looking at the real deal.

In our own world, there are domains where the capabilities of computers have long outstripped humanity’s. As far back as 1945, the University of Pennsylvania’s ENIAC machine could add 5,000 numbers per second. That rate of computation is, of course, impossible for a person to replicate, but few would refer to this early computer as “intelligent.” Instead, the traditional threshold for artificial intelligence is the Turing test, or the ability of a machine to engage in conversation fluently enough to fool a person into mistaking it for human. This is a threshold that the new chatbots have blown past. They were designed to. To register as “artificial intelligence,” whether in film or life, the machine in question must convince us that it is like us. We are our own benchmark for what intelligence means, and both Rotwang and OpenAI are going to design accordingly.

In his Maschinenmensch, Lang also anticipates current concerns about AI’s potential to exacerbate political divisions. Metropolis’ workers were already (justifiably) resentful of the elites up in their pleasure gardens. Robot Maria was the proverbial match that lit the tinderbox, sparking a riot that nearly destroyed their city and killed its children. OpenAI knows that its product could be put to similar use. As this 2019 report confirms, it’s known as much for years. Even in ChatGPT’s earlier iterations, there were internal concerns about its potential for “generating fake news articles or building spambots for forums and social media.” Image-making AI tools like DALL-E and Midjourney point toward a future where it’s easy to produce photos and, eventually, videos of things that never happened. As much as I’d like to see Trump in handcuffs, I still find pictures like the ones below less funny than disturbing. How long until QAnon boards start circulating “photographic proof” that Nancy Pelosi is summoning demons and Joe Biden is trafficking children?

This might be a bit of a stretch, but to my mind, even the power dynamics in Metropolis—the question of who controls the Maschinenmensch—seem to reflect our own situation. Even as the state, in the form of city overlord Joh Fredersen, attempts to use the android for its own ends, the tech is ultimately under the sway of the rogue scientist Rotwang. In the United States, at least, the government has yet to demonstrate the will or expertise to adequately regulate AI. Corporate behemoths like Microsoft and Google are racing to maximize profits, and a set of voluntary guidelines issued by the White House certainly isn’t going to slow them down. The people making the decisions are the unelected mad scientists of Silicon Valley—a clique that cares about our wellbeing about as much as Rotwang cares about the citizens of Metropolis. Think this comparison is unfair or hyperbolic? To again quote the Klein article:

> In a 2022 survey, A.I. experts were asked, “What probability do you put on human inability to control future advanced A.I. systems causing human extinction or similarly permanent and severe disempowerment of the human species?” The median reply was 10 percent.
>
> I find that hard to fathom, even though I have spoken to many who put that probability even higher. Would you work on a technology you thought had a 10 percent chance of wiping out humanity?

I sure as hell wouldn’t, and I bet you wouldn’t either. But right now, AI labs are staffed with people who are happy to plow ahead with work that they believe to have roughly the same odds of killing us all as the Nuggets have of winning the NBA championship.

My main concern, though, isn’t that ChatGPT will turn into Skynet. It’s that we’re bumbling toward a drastically altered world without pausing to prepare. As a professor, podcaster, and writer who thinks about movies for a living, my thoughts naturally turn toward education and the arts. Some of my concerns have been practical. How am I going to structure my classes as the undergraduate essay fades into obsolescence? Am I still going to have a job in five or ten years, when AI can synthesize film history and assess student performance even more cheaply than adjunct labor? Will anyone even find my newsletter or podcast on a web overrun with spontaneously generated content? Will new tools be a leap forward for the movie industry, giving filmmakers the ability to realize heretofore impossible artistic visions, or will we hand over the director’s chair to a Netflix algorithm that instantly crafts custom movies it thinks we’ll like?

But it’s also worrying on an existential level. I’ve noticed a lot of people who share my suspicion of AI comforting themselves by pointing to its current limitations. DALL-E has trouble drawing hands. ChatGPT’s sentences are sometimes redundant. Setting aside the fact that human artists famously struggle with hands and human writers frequently repeat themselves, unless the pace of development suddenly and unexpectedly drops off a cliff, the tech will have overcome these challenges in the blink of an eye. What then? If AI can produce its own Citizen Kane without a cast and crew, will anyone care about (let alone fund) human-made projects? What’s the role of a professor when students have access to interactive lectures from synthetic versions of Siegfried Kracauer, Pauline Kael, or Martin Scorsese?

I want to believe that part of what we value in art and education is intrinsically human. When you watch a great movie, you’re watching a team of people—directors, writers, actors, etc.—struggle to show you something about what it is to be a person. And while I fully realize that most of my students will quickly forget what the Production Code was or how Nosferatu channeled the anxieties of post-WWI Germany, I hope that I can convey a model of curious, enthusiastic, and human engagement with the world that might linger a bit longer. Maybe removing that human factor also removes much of the value of these endeavors. I mean, we still watch the World Cup even though we could just simulate the tournament on our PlayStations.

I don’t know where this is all going. No one does. But I’m stressed about it, and I kinda think you should be, too.